Module 2 Formstorming

Sarah's Weekly Activity

Sarah Al-Fkeih | Project 2: Time and Data

Project 2

Module 2

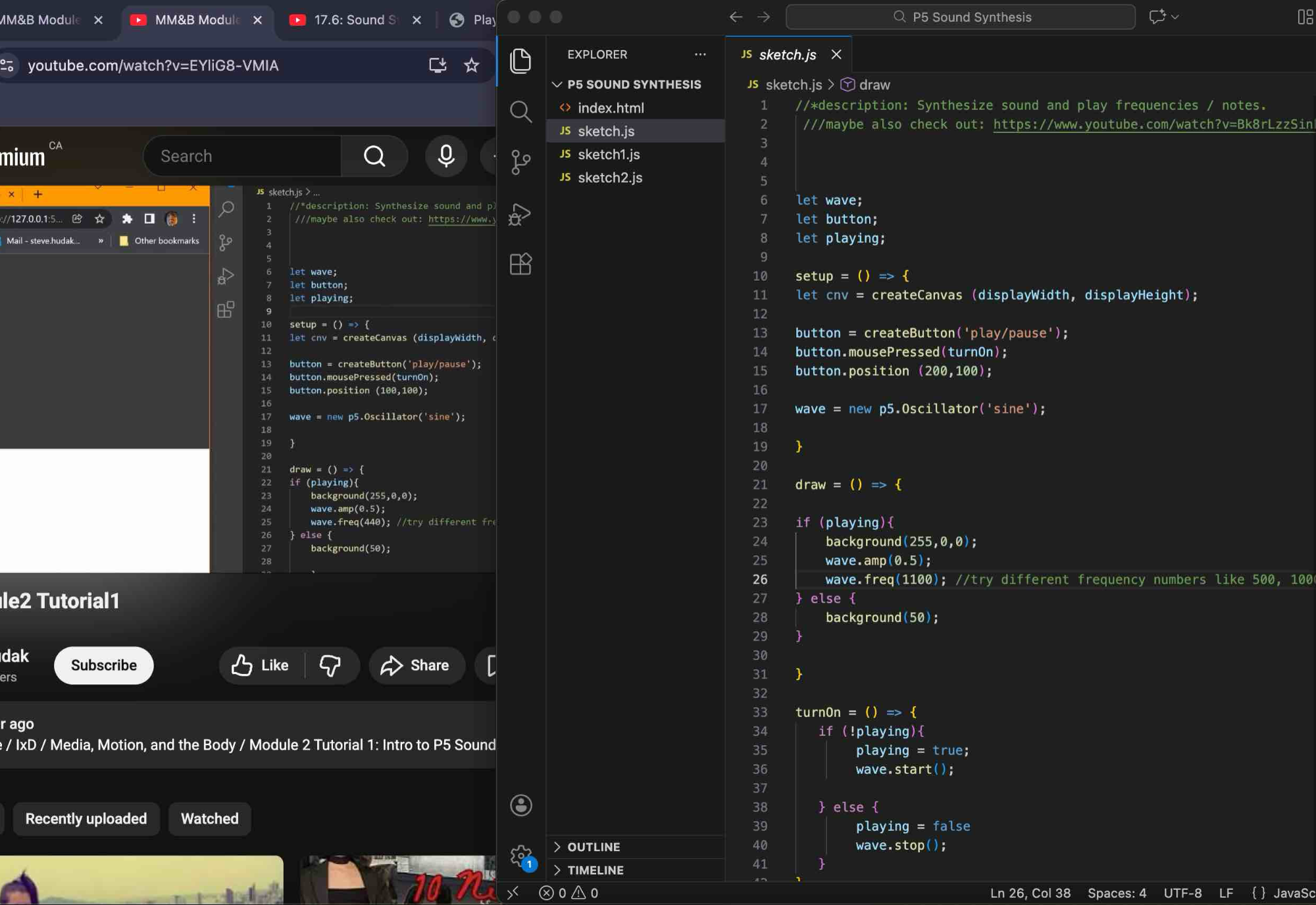

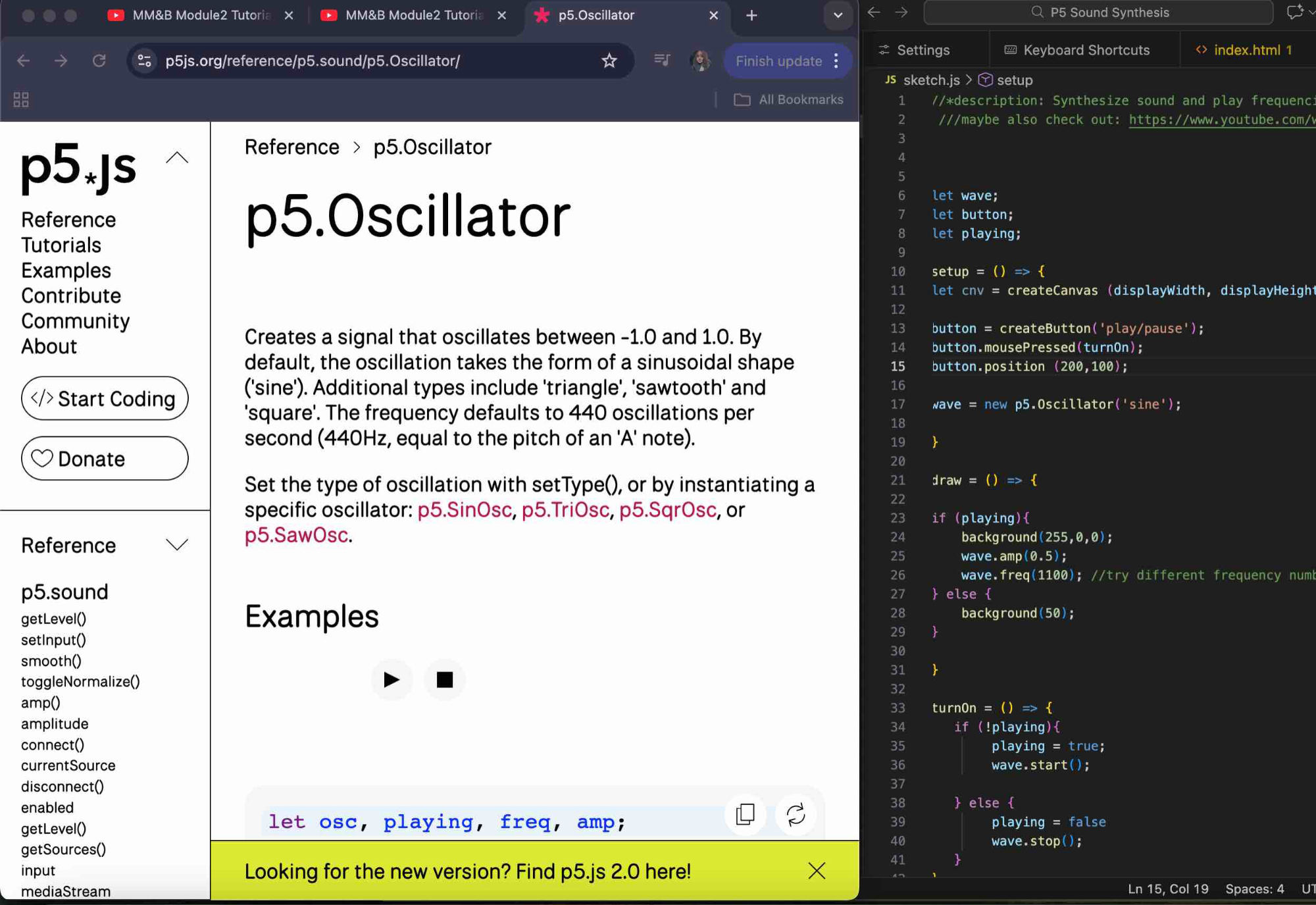

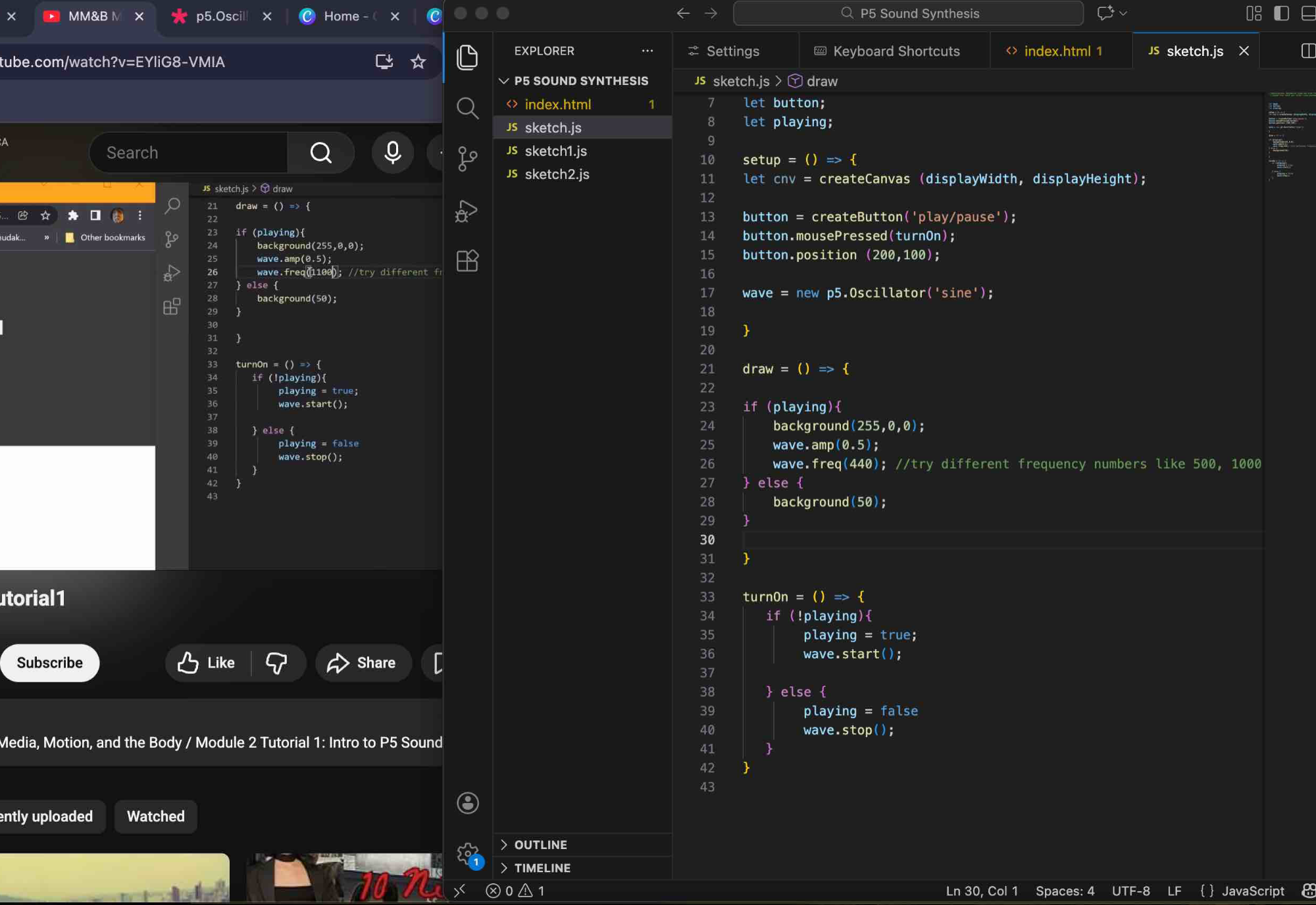

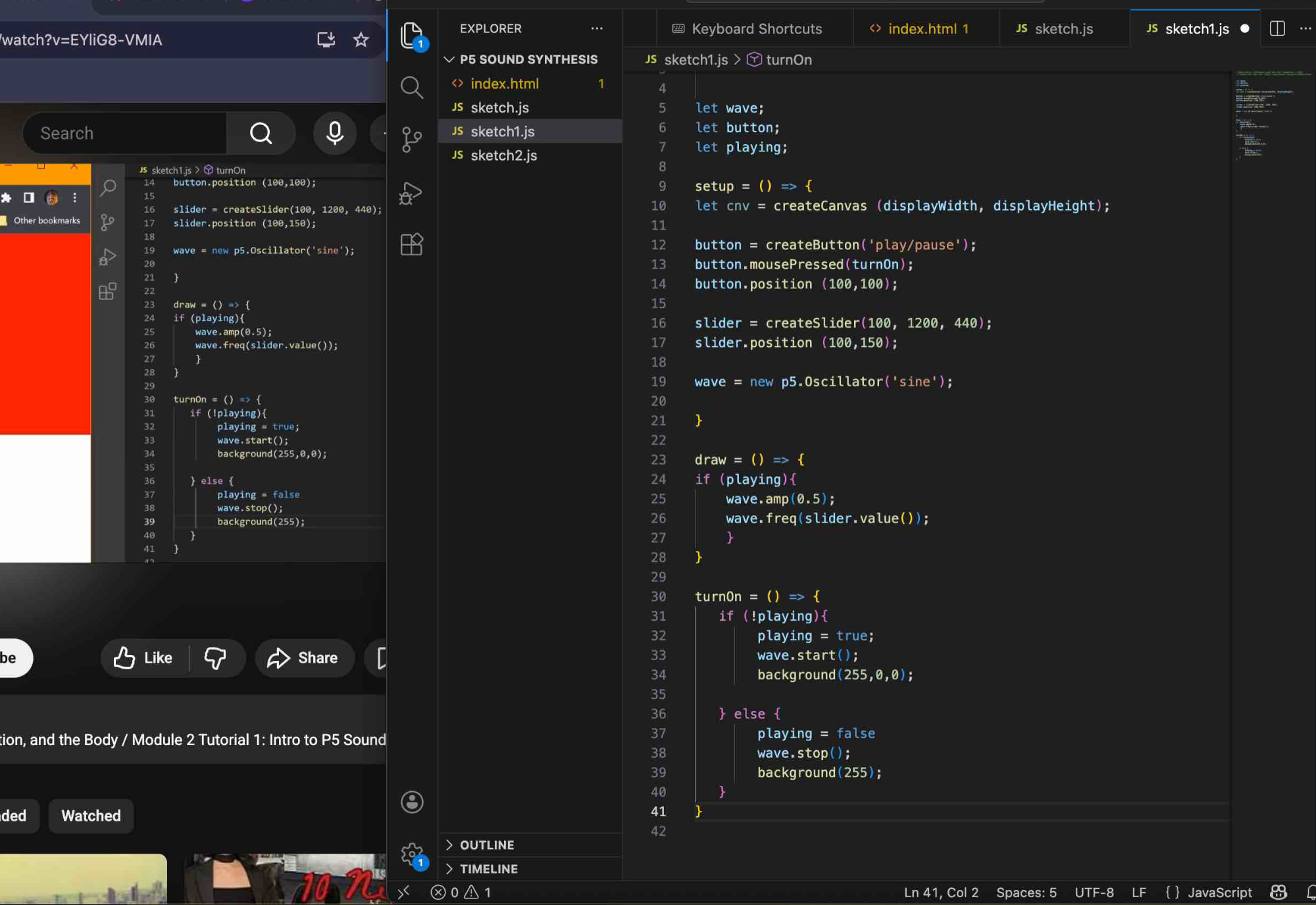

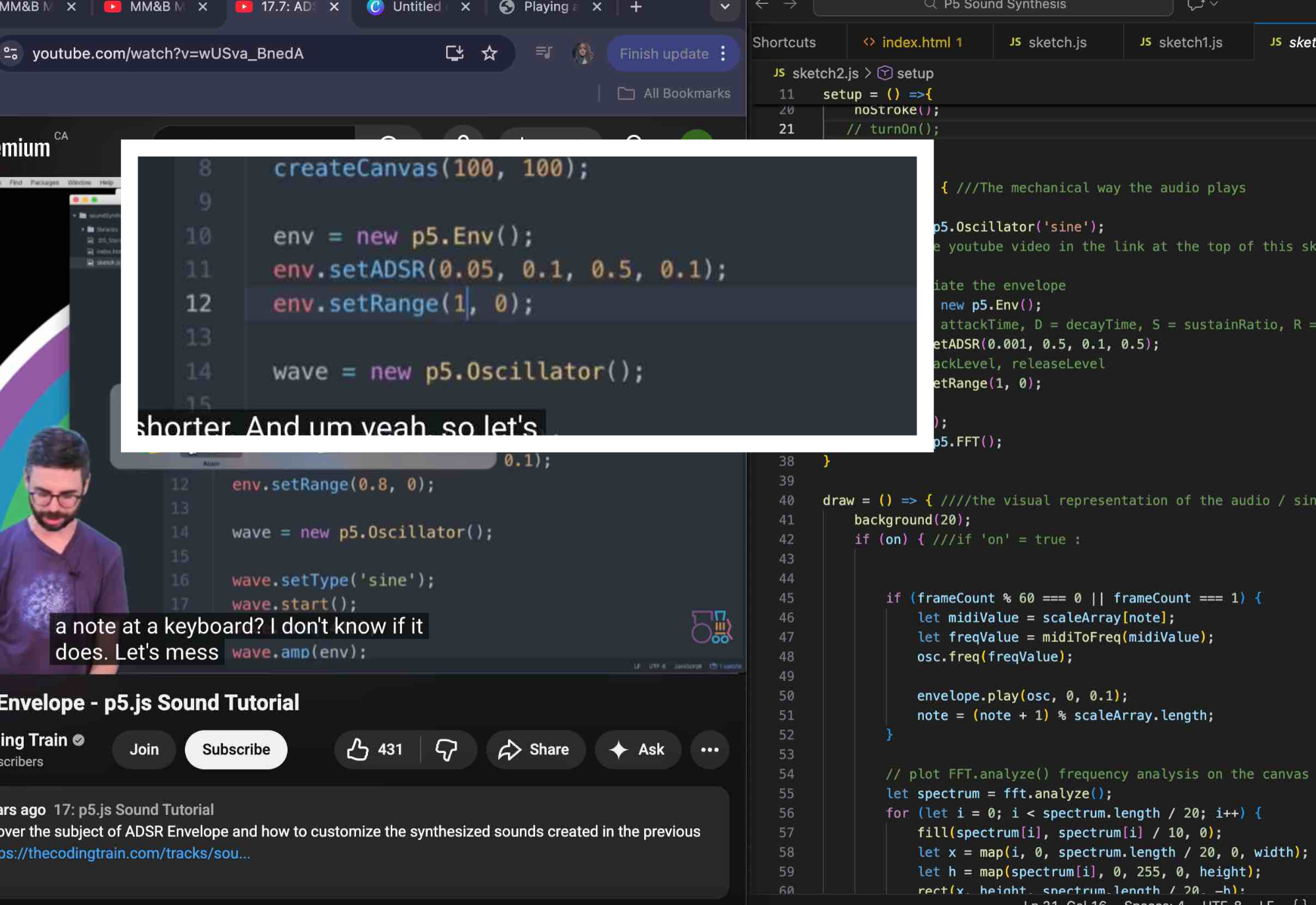

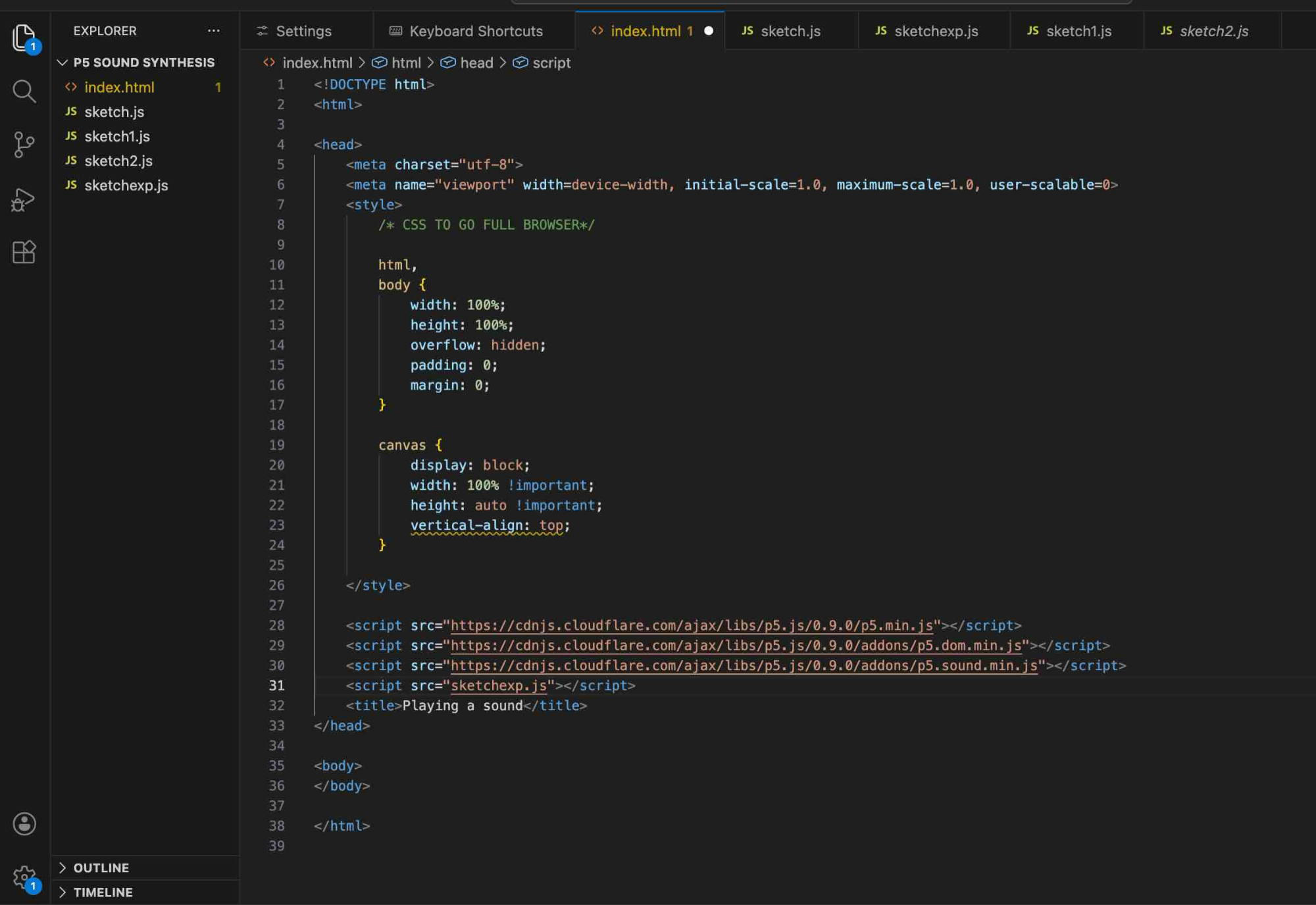

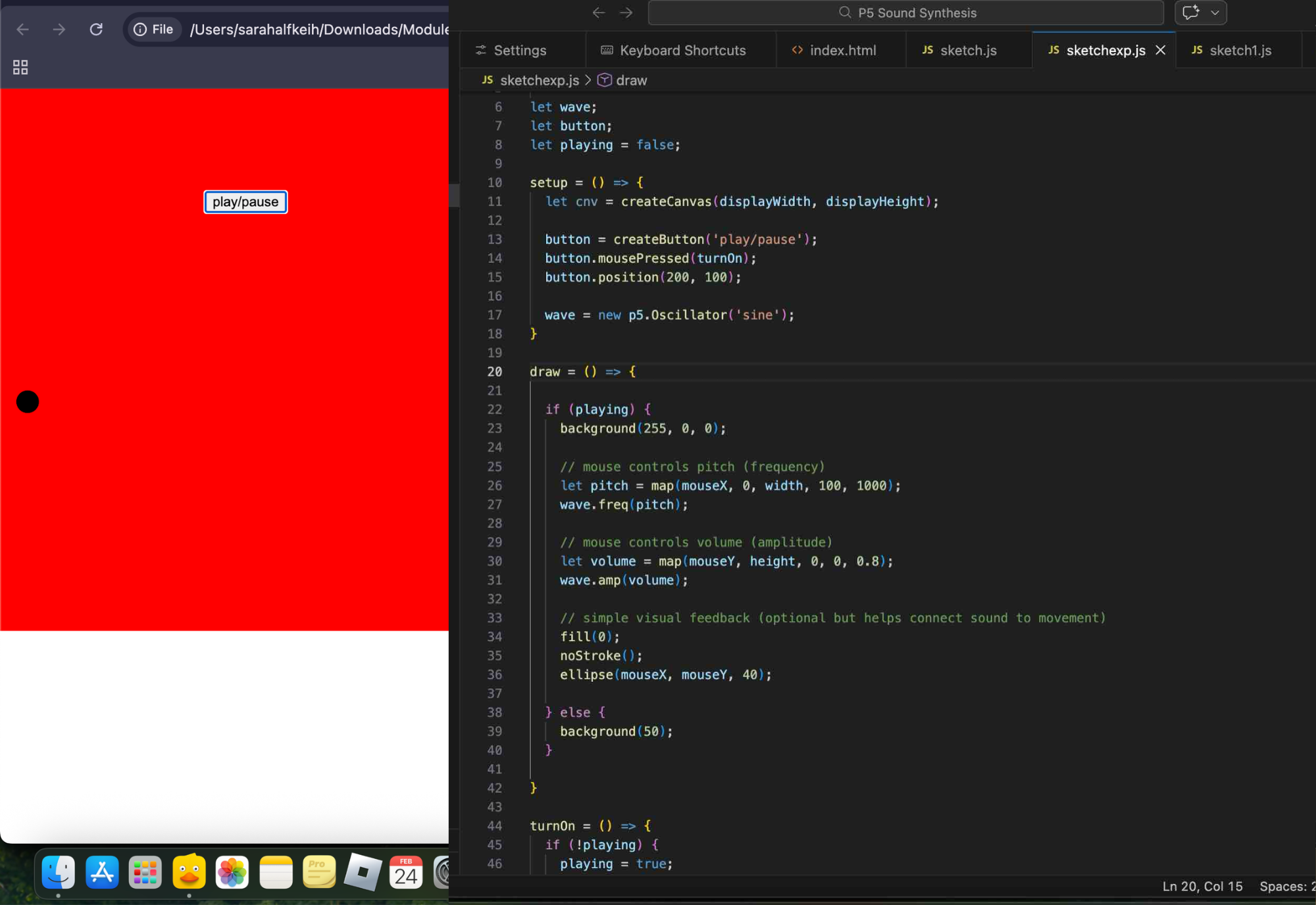

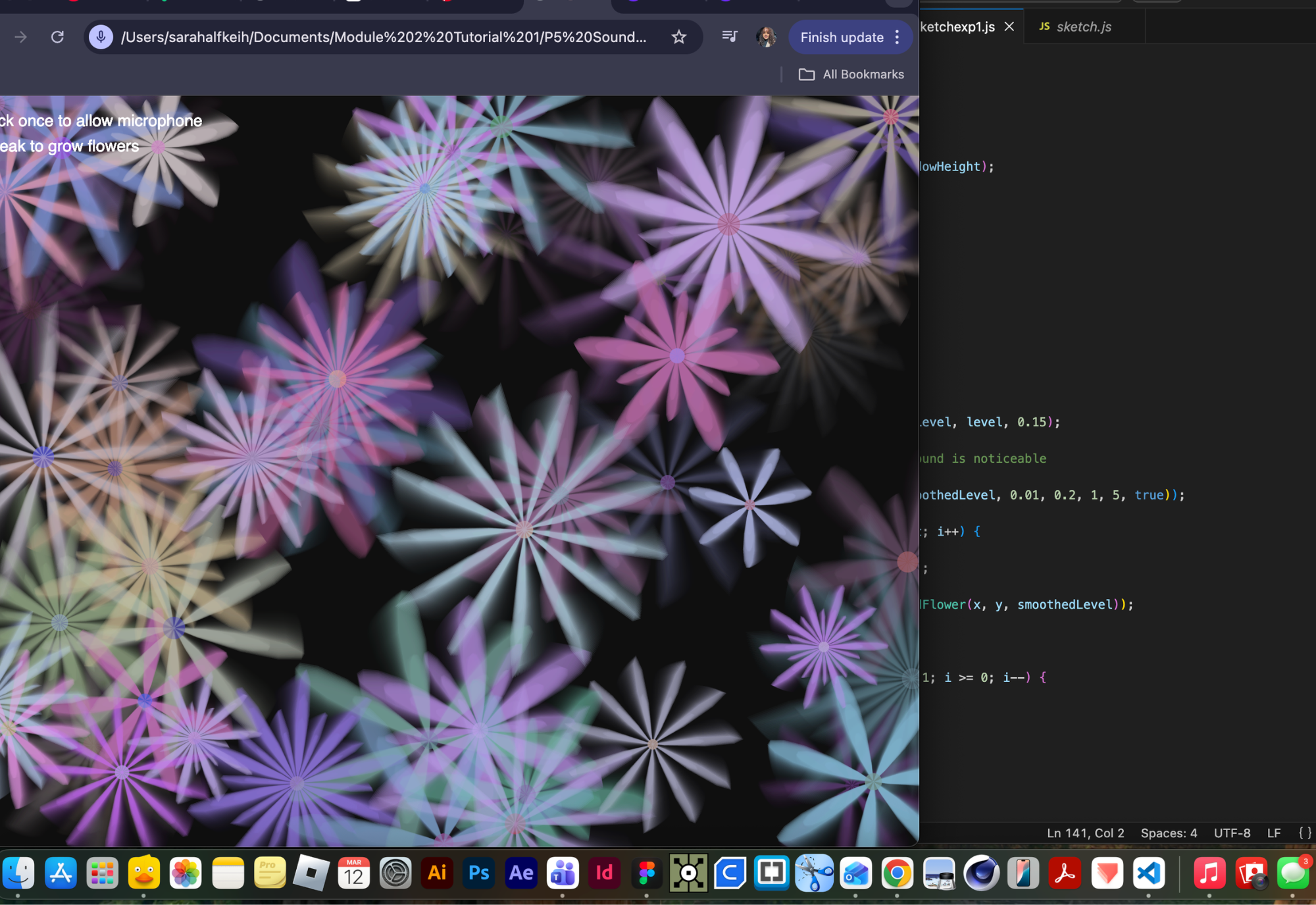

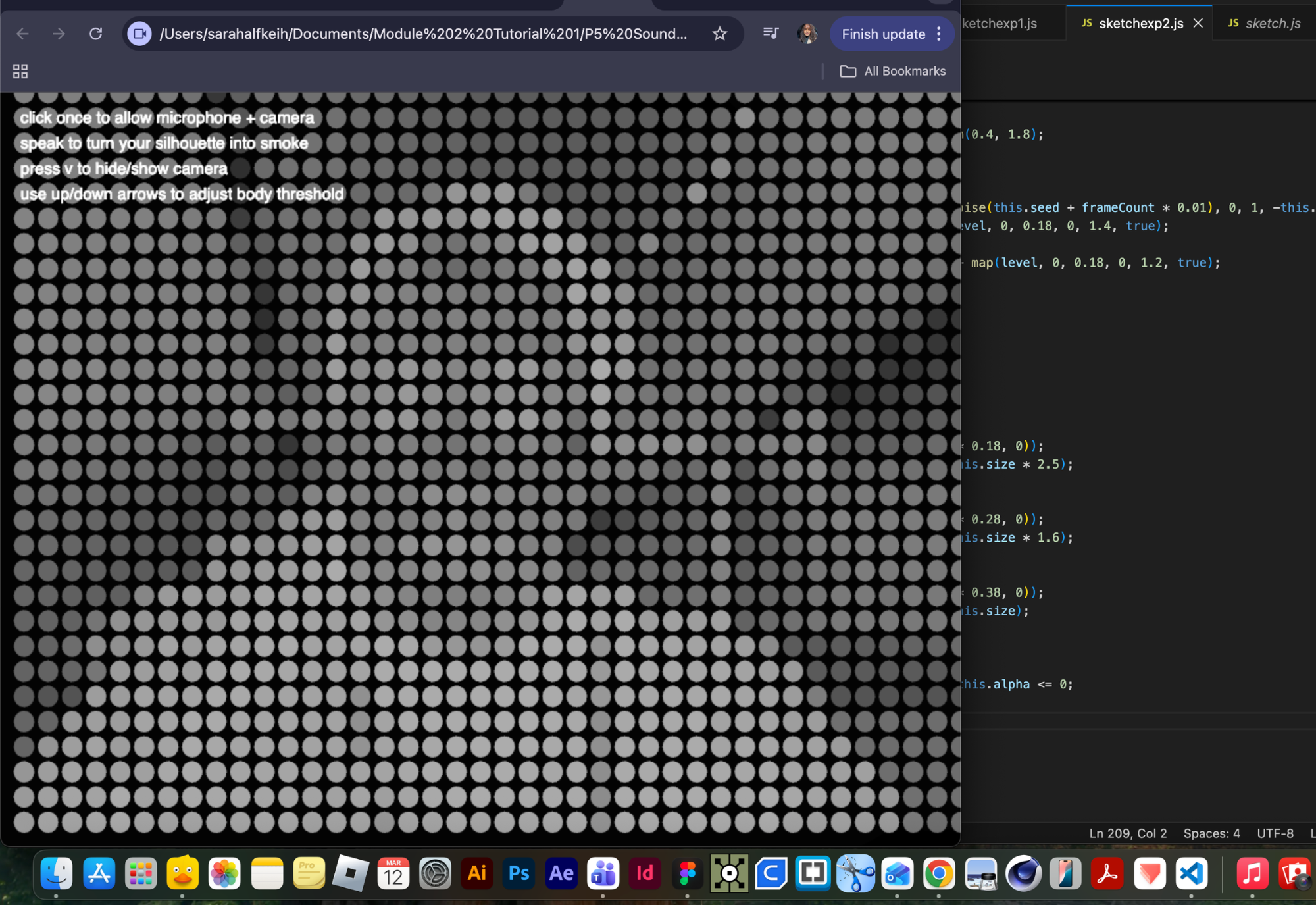

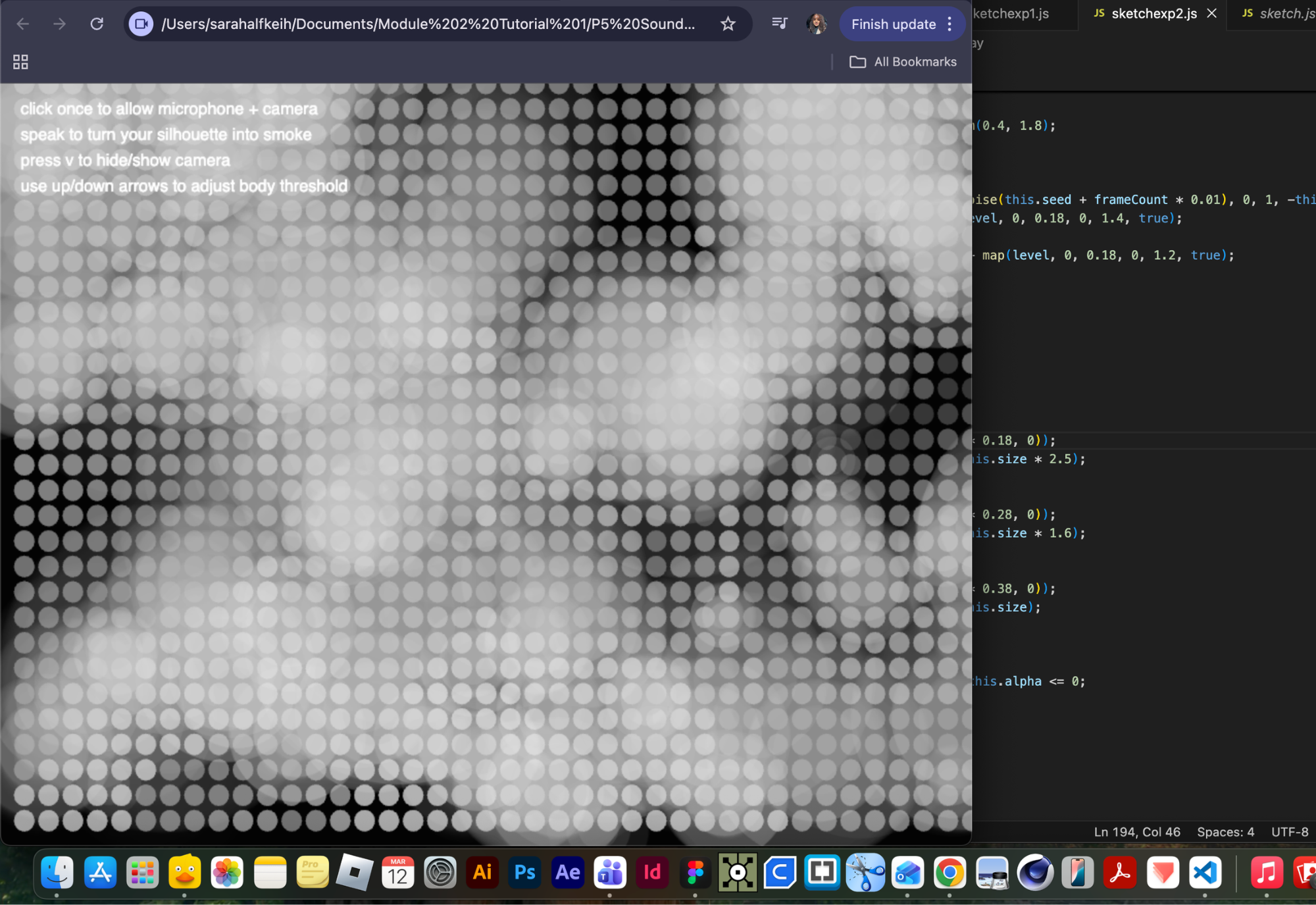

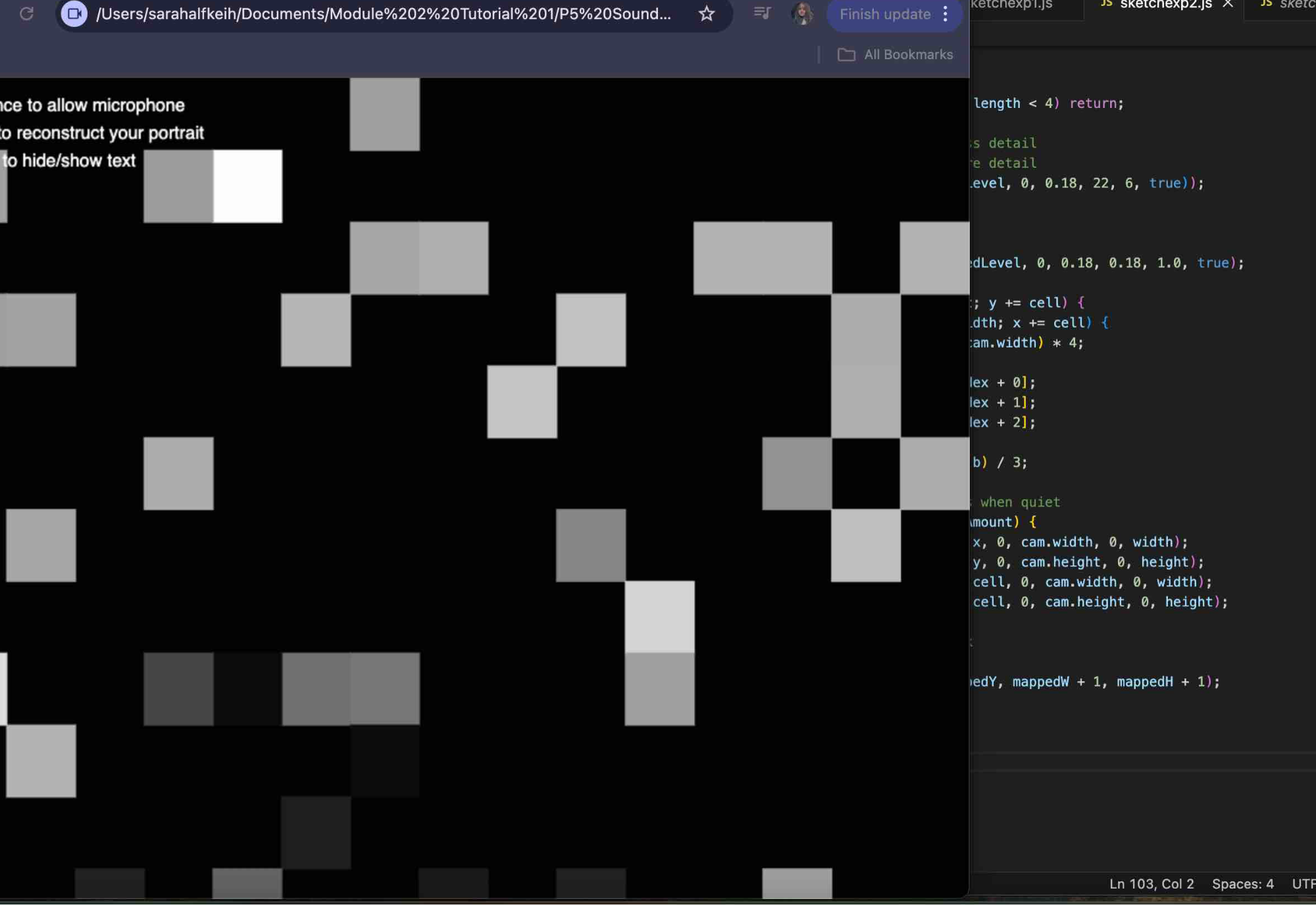

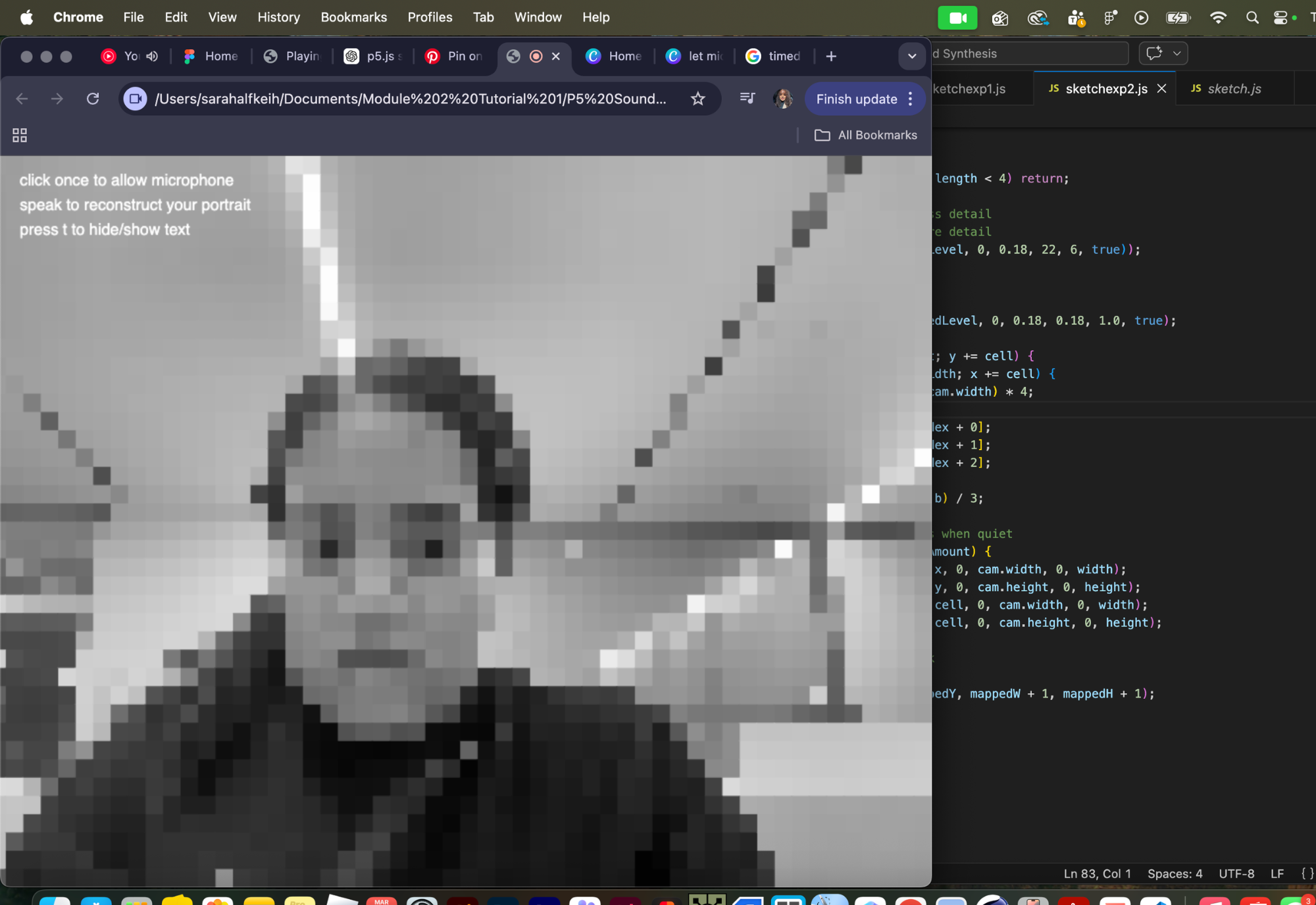

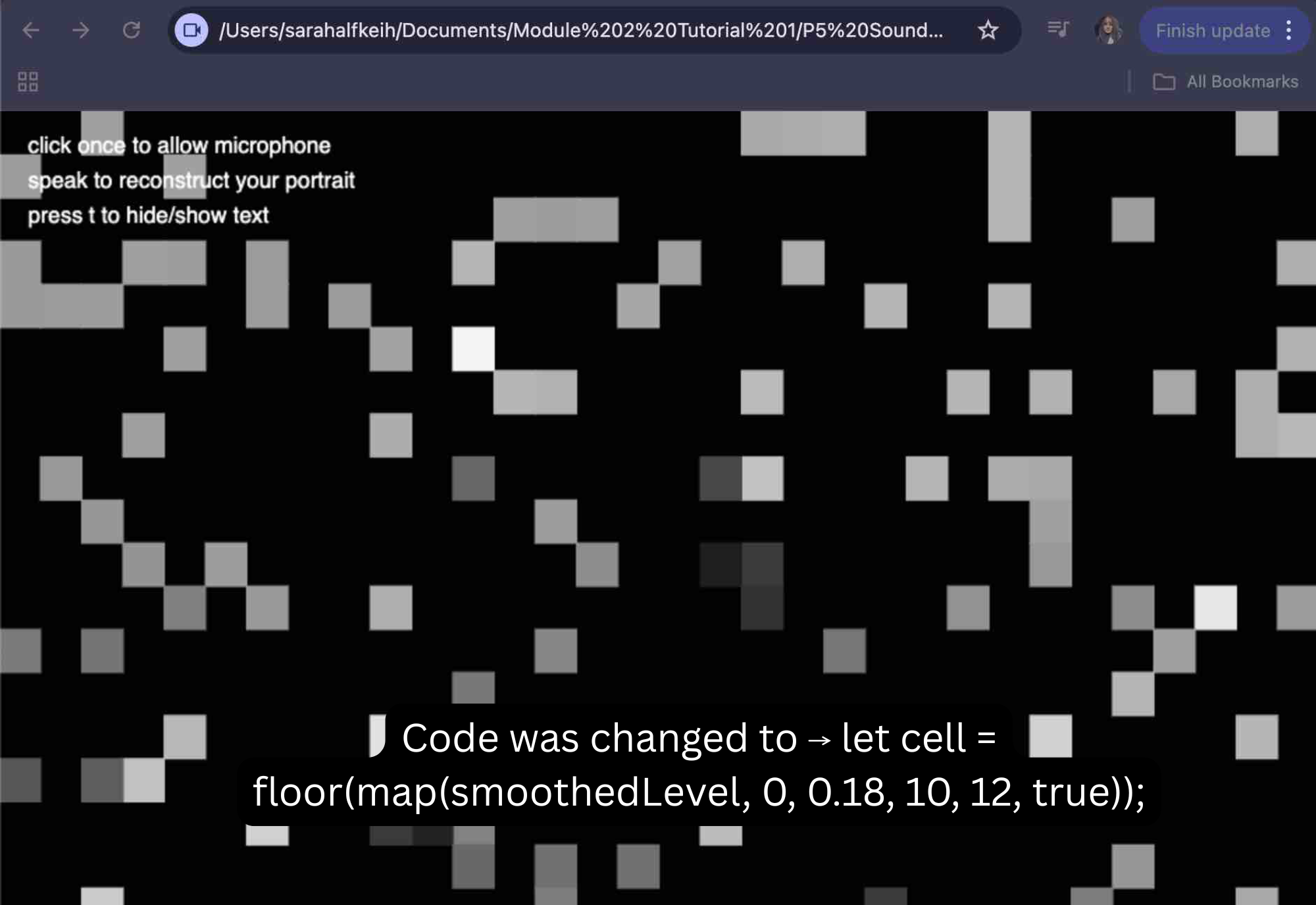

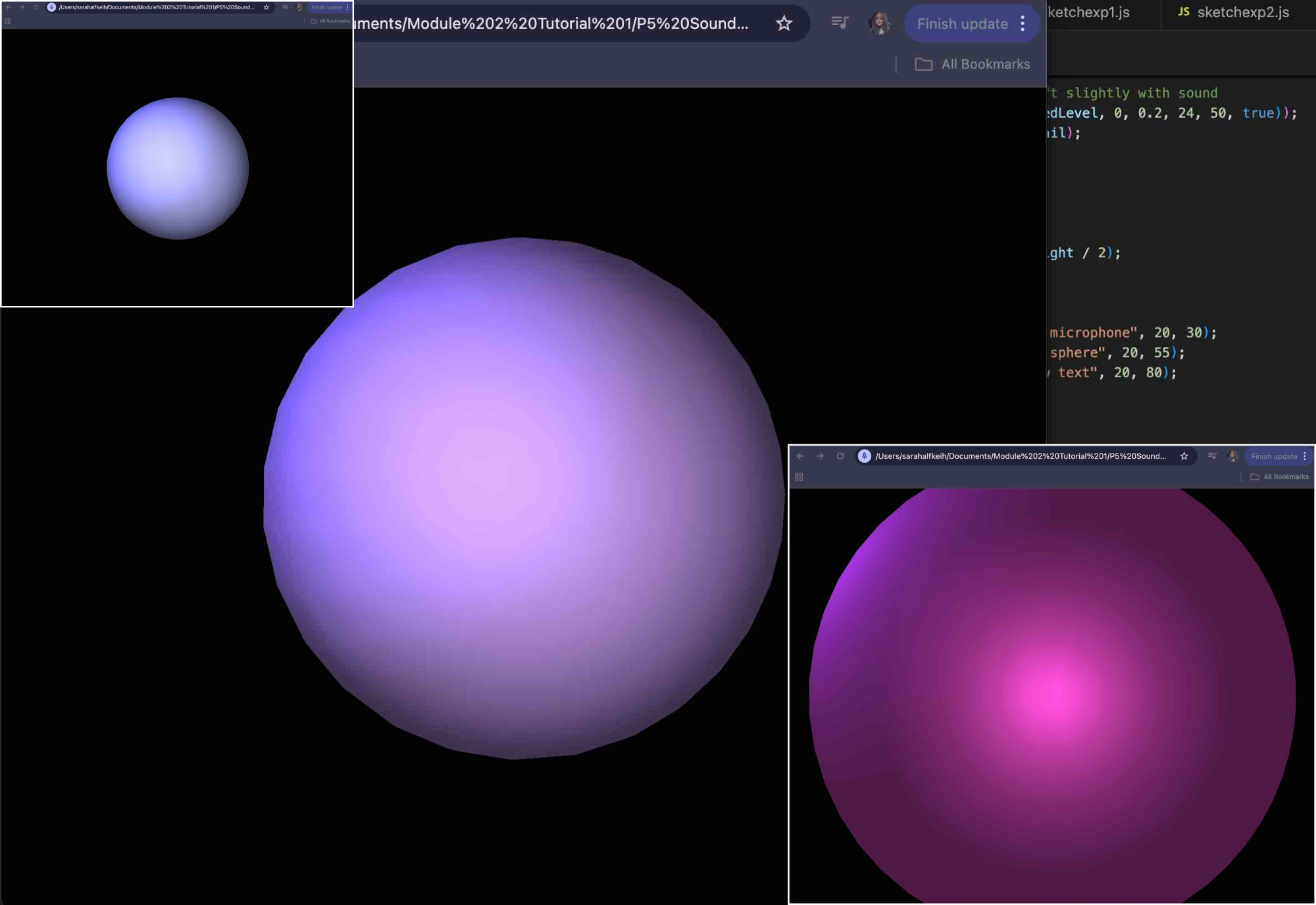

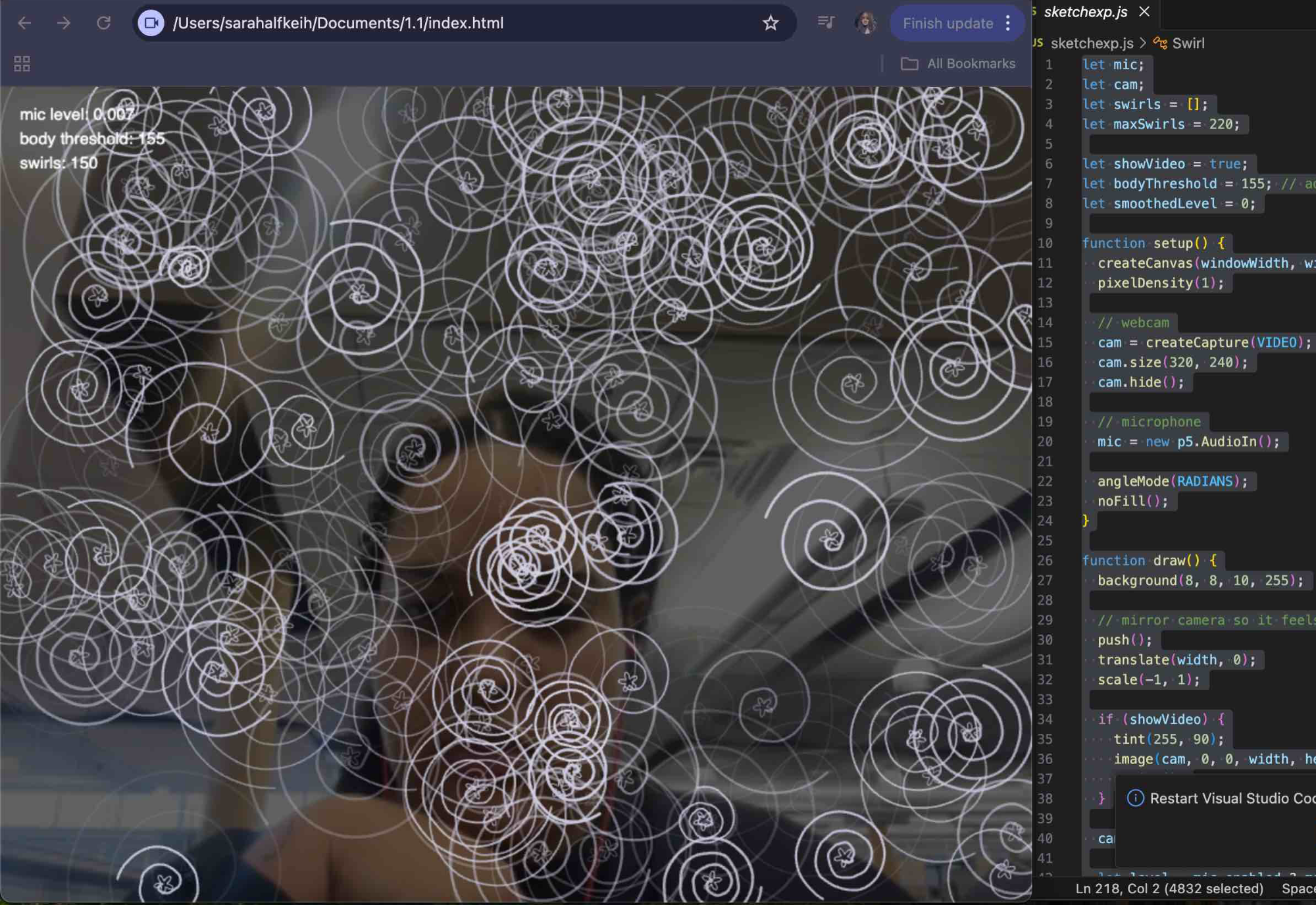

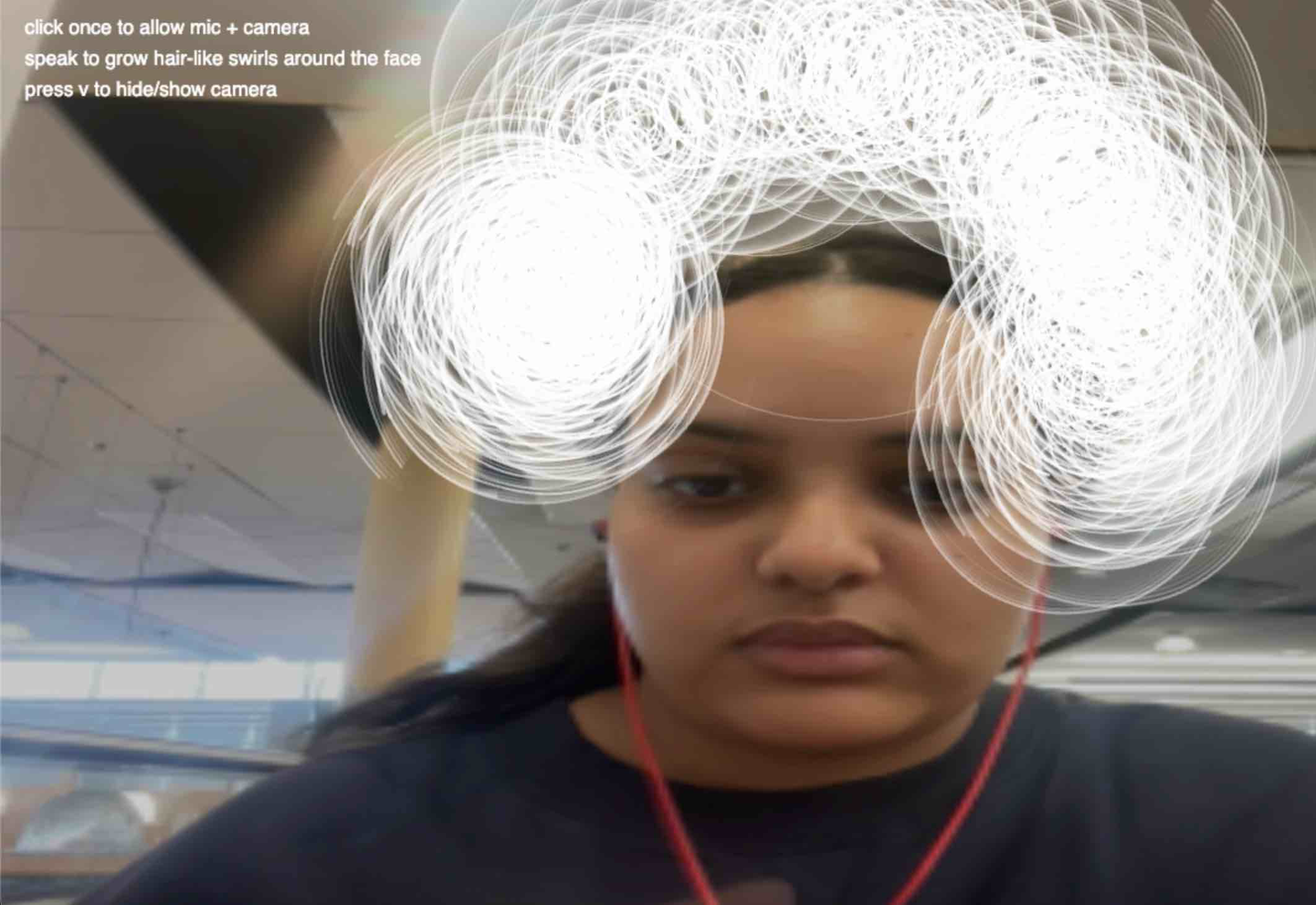

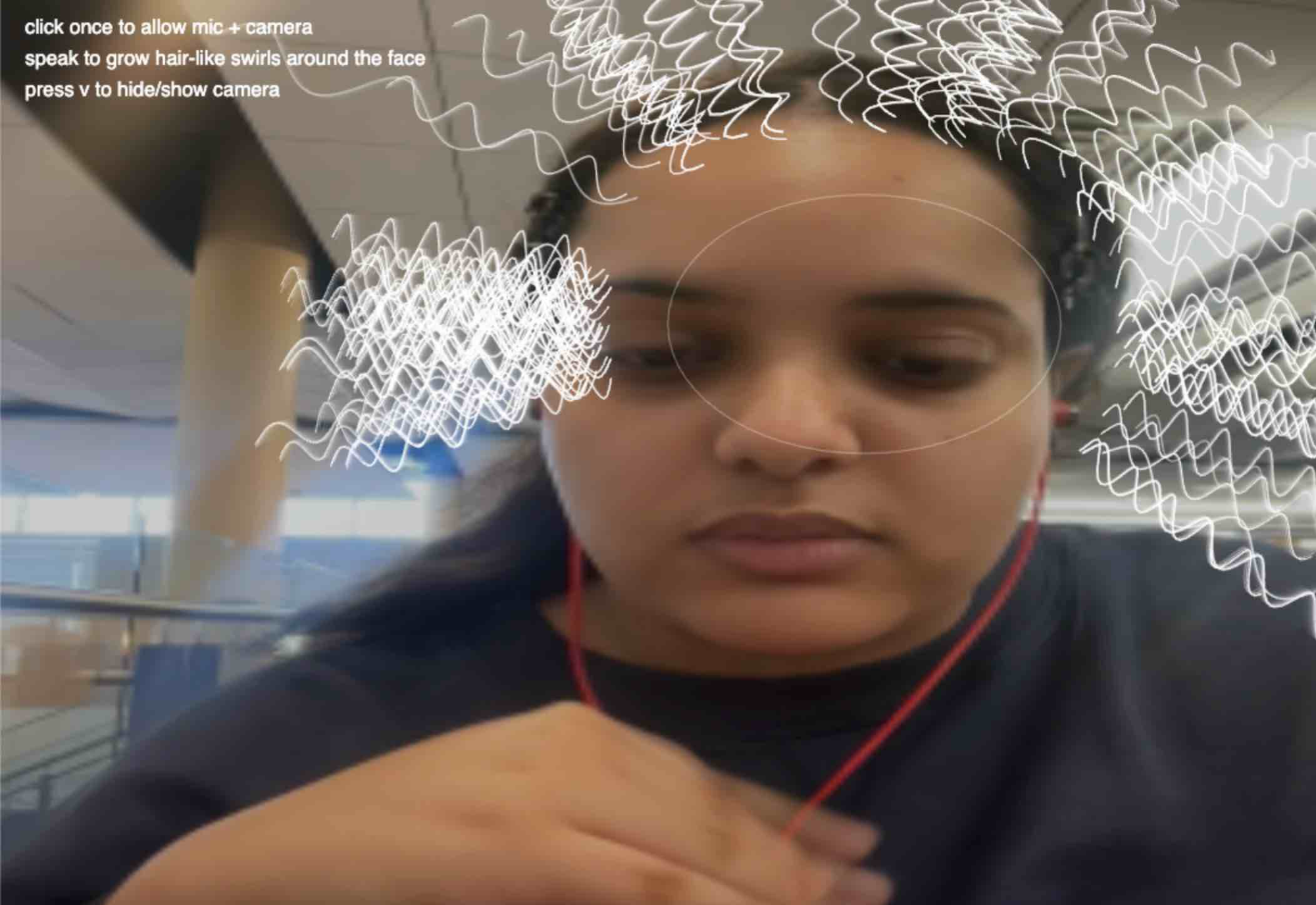

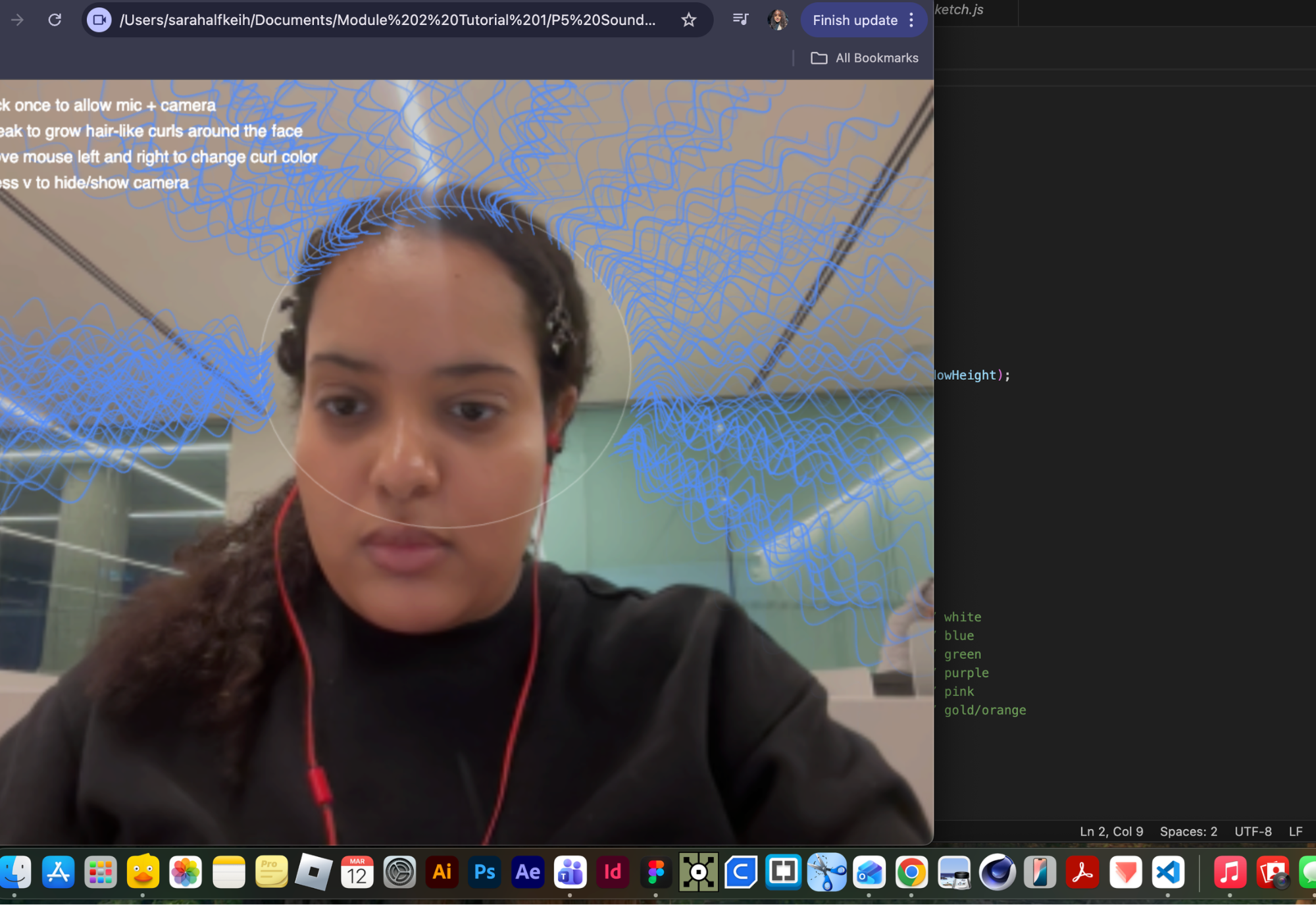

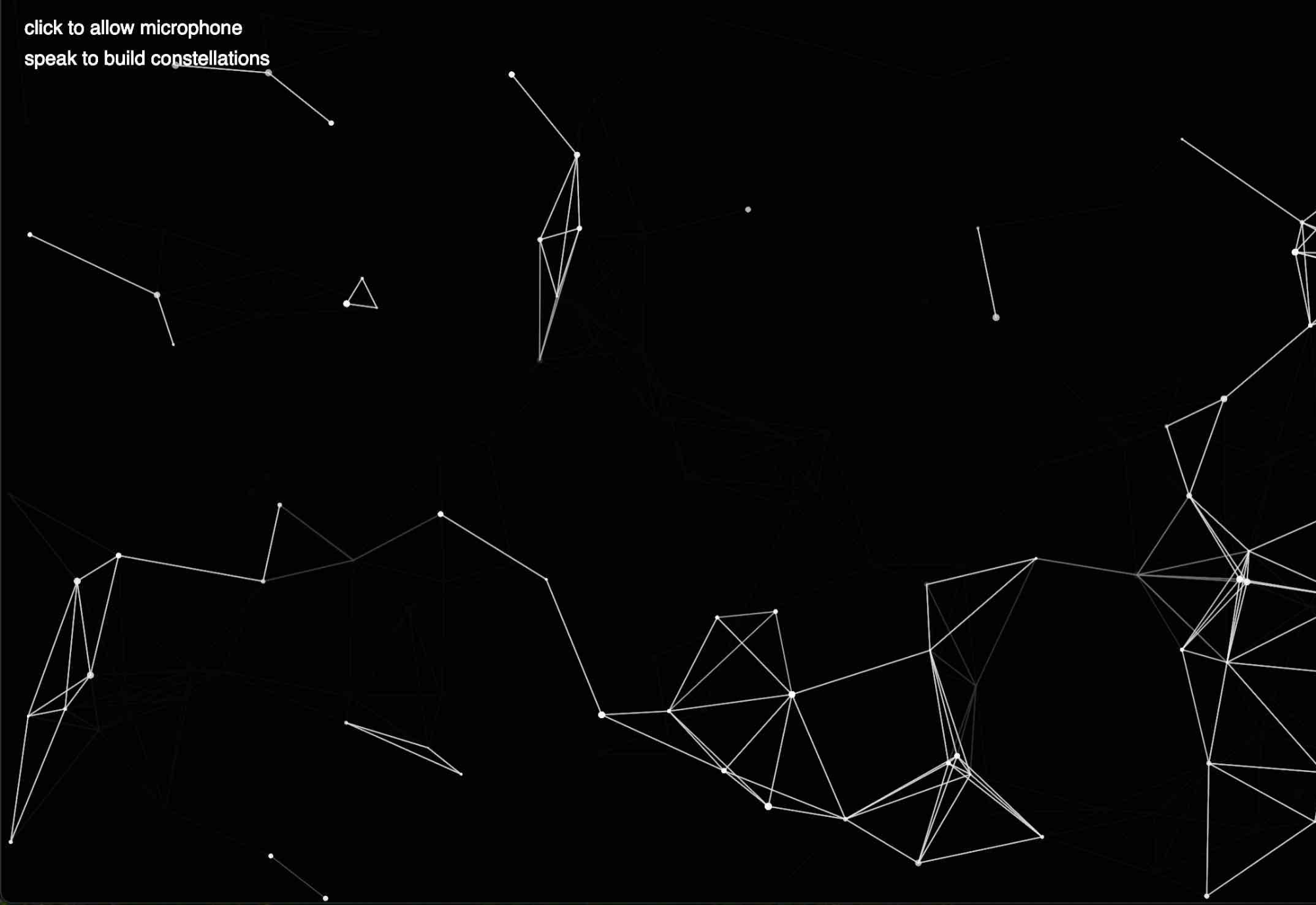

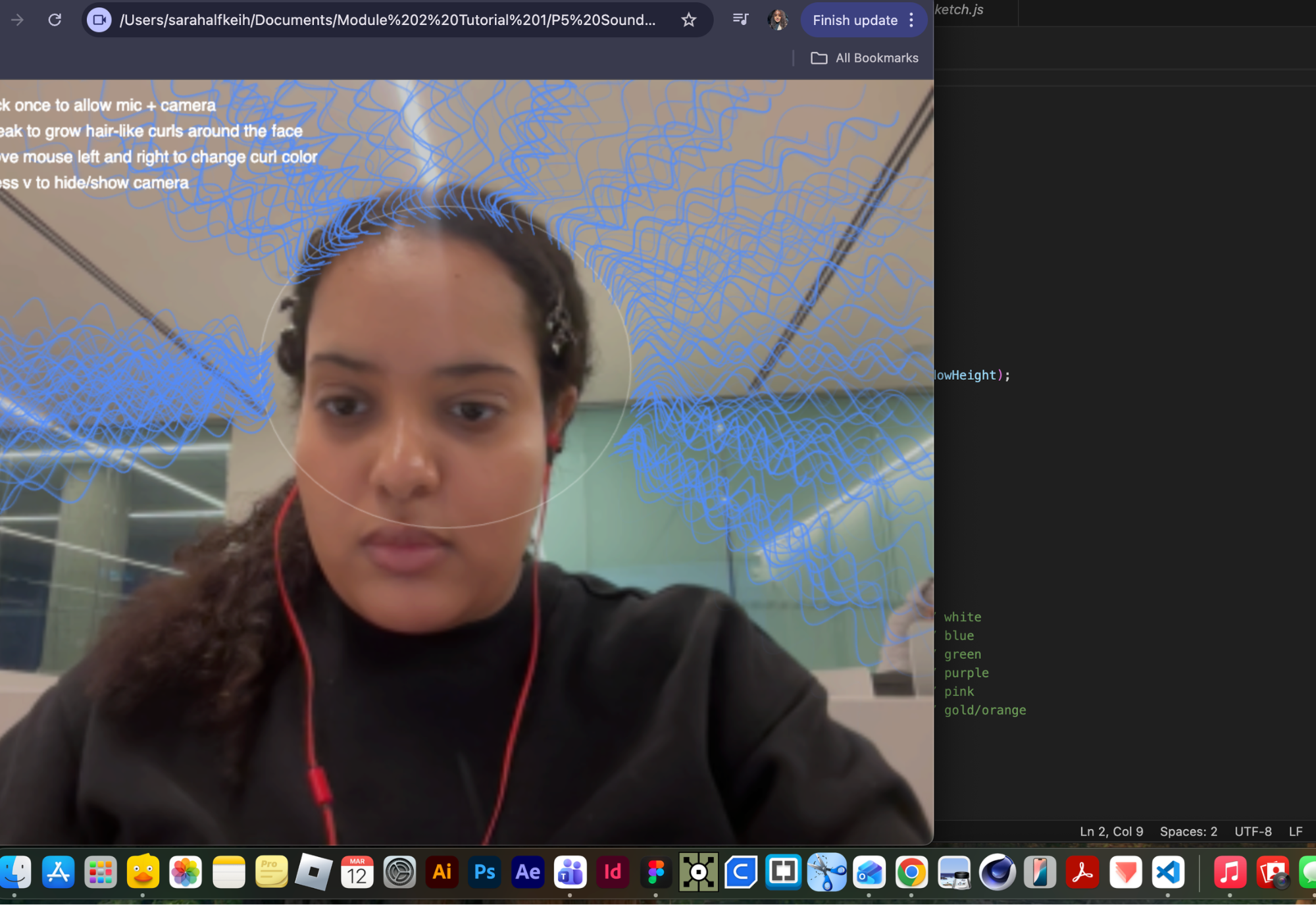

For Activity 1, I worked with my group to record sounds around campus, but I also followed my own idea by recording at home, specifically in my bathroom, where I get ready every morning. I was interested in customization, meaning how I change and present myself through things like makeup and hair, so I captured sounds in that personal space alongside the more public campus recordings. In Activity 2, I built on this by experimenting with p5.js sketches, exploring how sound and the microphone could connect to that idea of self and control. I tested both voice-controlled interactions and mic-reactive visuals to compare different ways sound could shape an experience. I then brought these explorations into my portfolio to show both my process and how my concept developed from recording to interaction.

Activity 1

Activity 2

Project 2

Final Project 2 Design

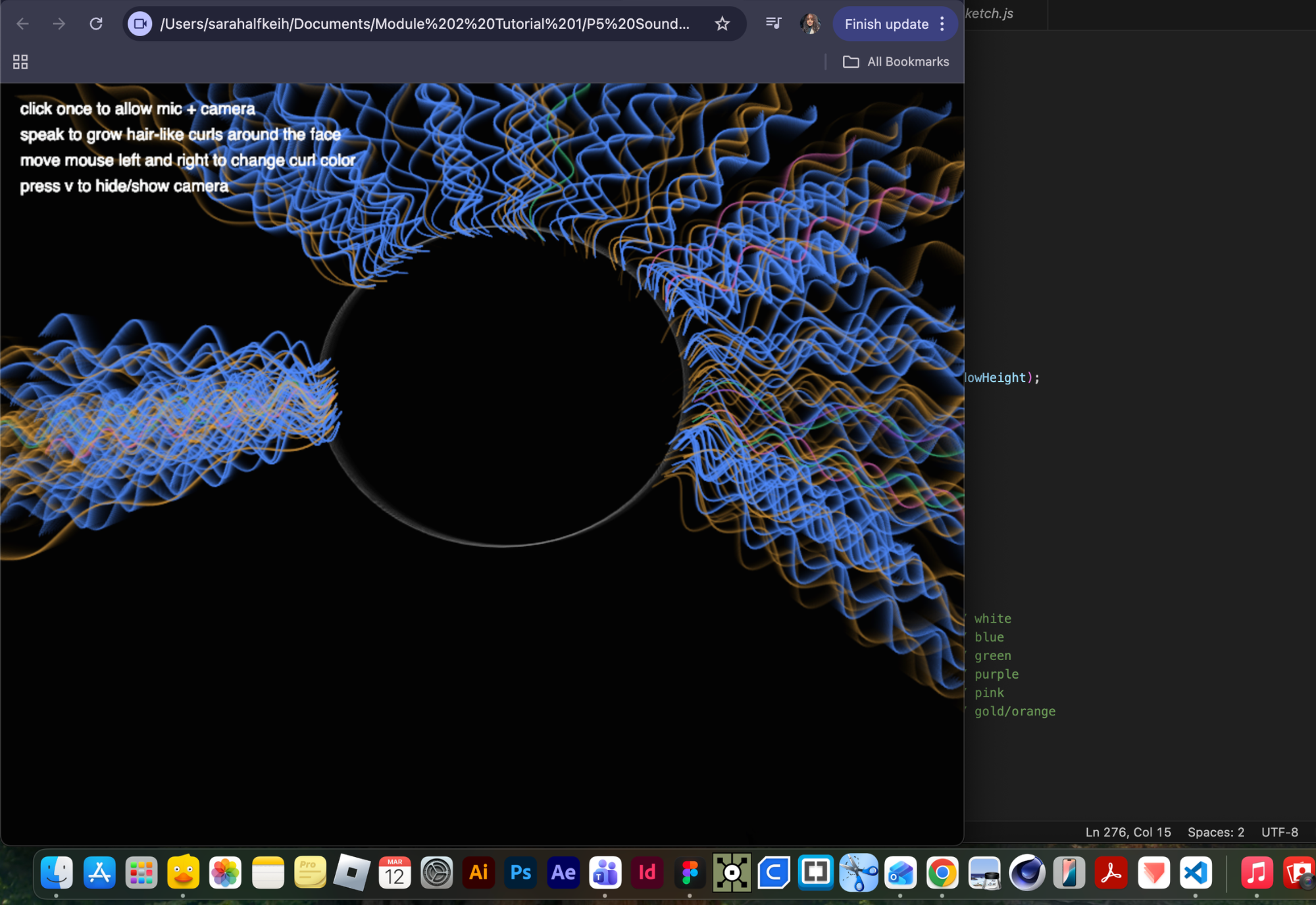

P5 Interactive Audio Web Header Portfolio

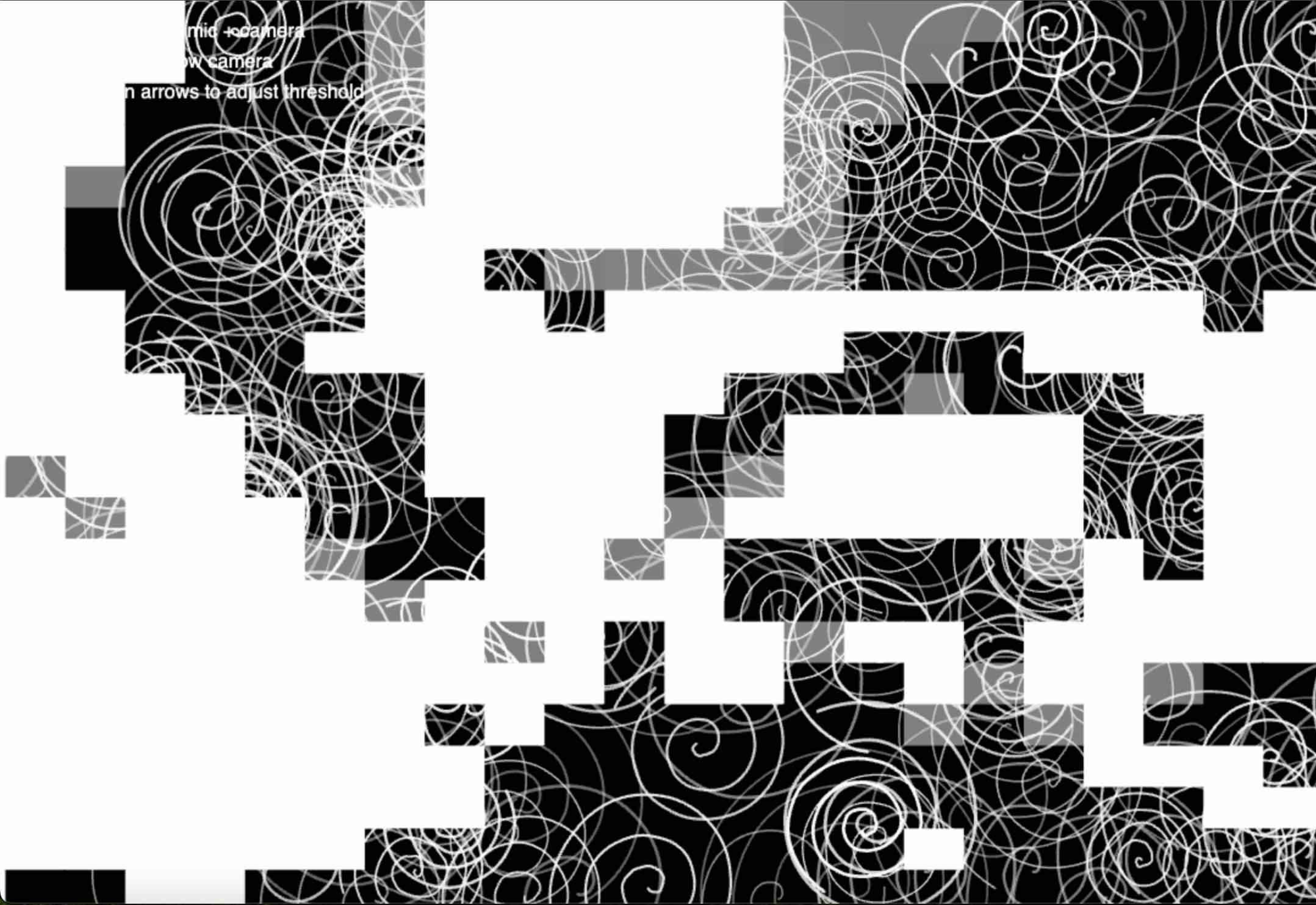

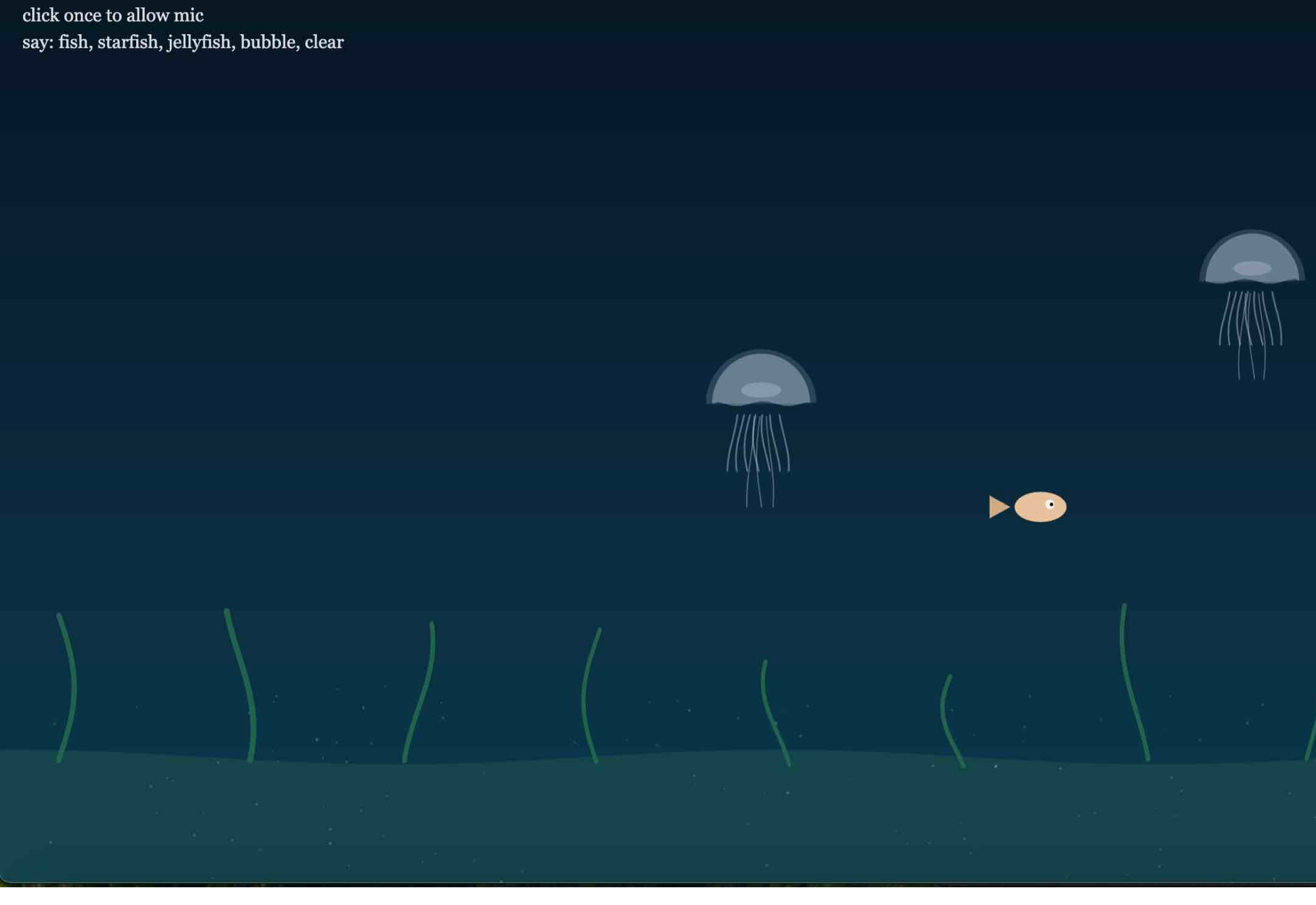

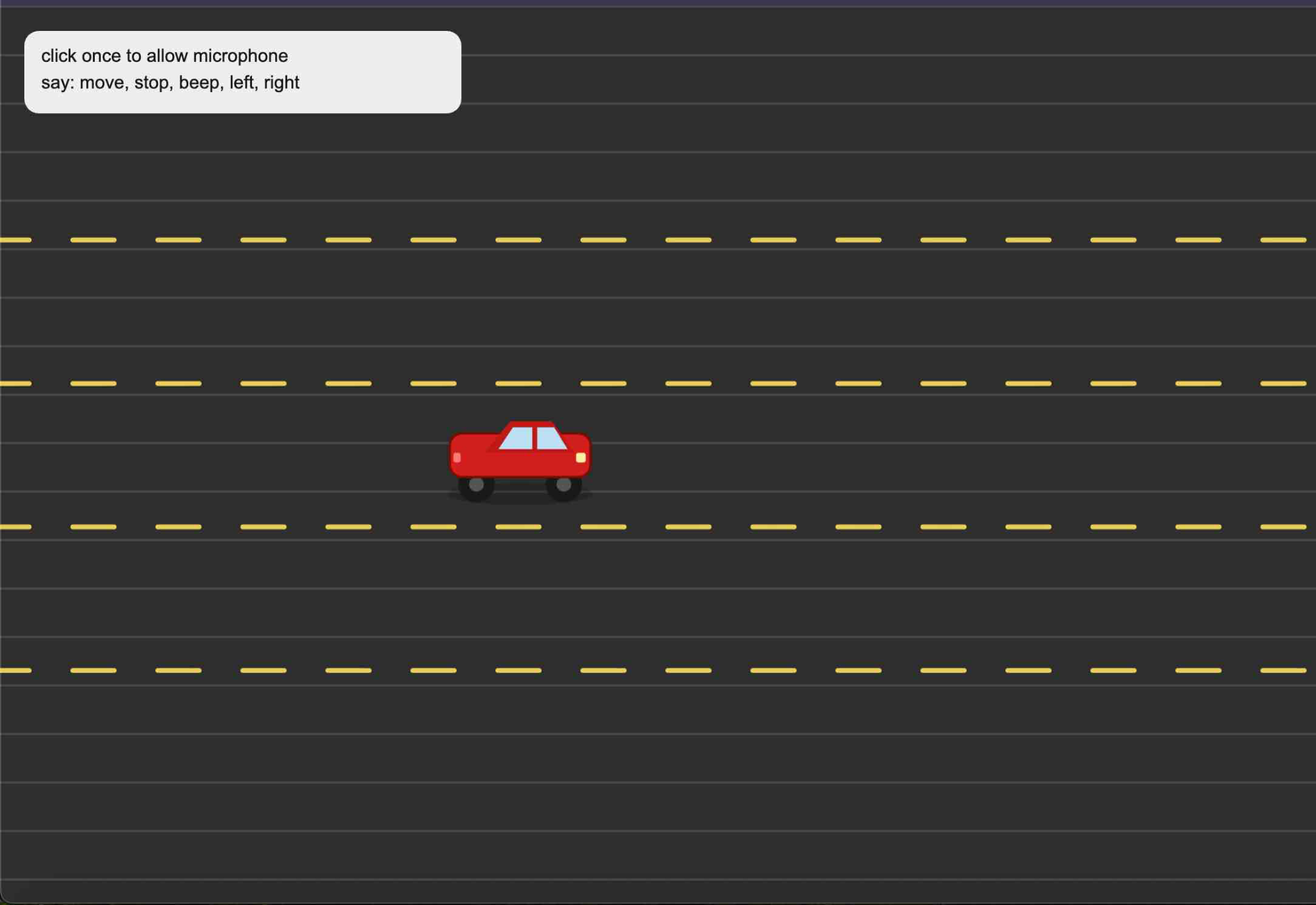

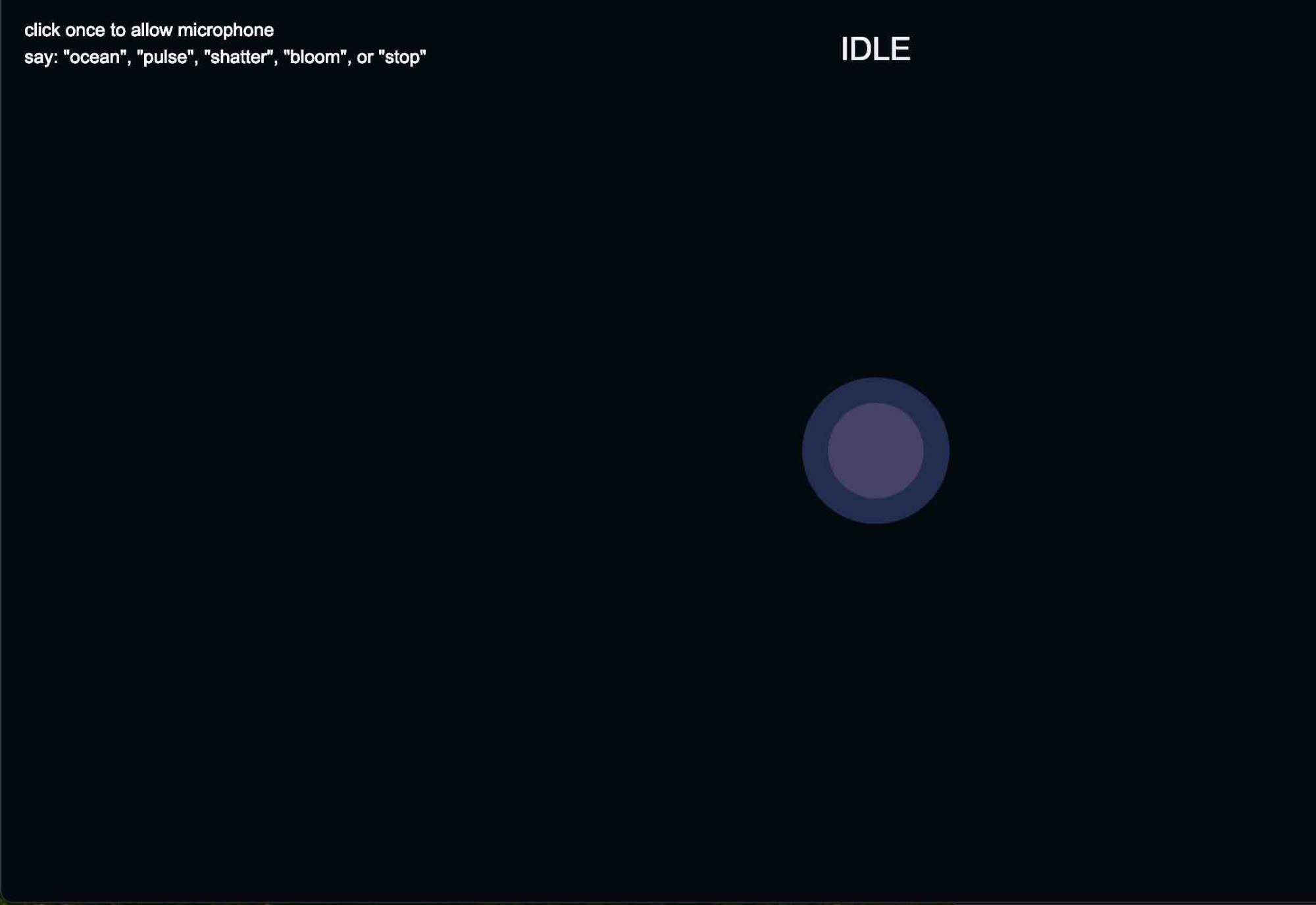

My portfolio explores playful interaction using the microphone as the main input. I experimented with how voice and sound could directly control visuals in p5.js. One example is a driving interaction where a car responds to spoken commands like “move,” “stop,” “left,” “right,” and “beep,” so speech replaces the keyboard or mouse as the form of control. I also explored mic-reactive visuals using classes from the p5.sound library, such as p5.AudioIn, p5.Amplitude, and p5.FFT, to map sound data to elements like shape size, movement, and animation. In another experiment, I used speech recognition to turn spoken words into live subtitles on screen, so that sound became both input and output. All concepts and interactions were created by me through experimentation; AI was used only as a technical support tool for debugging and improving my code, not for generating ideas.

I settled on the curl interaction as my final idea because it felt like the strongest and most natural way to connect my concept of self-customization with sound. Compared to my other experiments, it was more personal and intuitive, since it relates to my everyday routine of getting ready and styling my hair. The curling motion also translated well into a visual and interactive experience that is easy to control and understand through the microphone. It balanced play and meaning: I was still exploring voice and sound as input, but in a way that felt connected to identity and routine rather than to abstract interaction alone.
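To make the two kinds of microphone input above more concrete, here is a minimal sketch of the logic behind them: mapping a mic level to a visual size, and picking a command out of recognized speech. It is not the code from my actual sketches. The function names (`levelToSize`, `smooth`, `parseCommand`, `applyCommand`), the car state fields, and the 15-degree turn step are all assumptions made for illustration.

```javascript
// Illustrative helpers only; names and values are hypothetical,
// not taken from the original portfolio sketches.

// Map a microphone level (expected between 0 and 1) to a size range.
// Values outside 0..1 are clamped so the shape never over- or undershoots.
function levelToSize(level, minSize, maxSize) {
  const clamped = Math.min(1, Math.max(0, level));
  return minSize + clamped * (maxSize - minSize);
}

// Ease the previous value toward the current one so the visual
// does not jitter from frame to frame. factor is between 0 and 1.
function smooth(previous, current, factor) {
  return previous + (current - previous) * factor;
}

// The spoken commands mentioned for the driving interaction.
const COMMANDS = ["move", "stop", "left", "right", "beep"];

// Return the first known command found in a speech transcript,
// or null if none of the words match.
function parseCommand(transcript) {
  const words = transcript.toLowerCase().split(/[^a-z]+/);
  for (const word of words) {
    if (COMMANDS.includes(word)) return word;
  }
  return null;
}

// Apply a command to a simple car state and return the new state.
// The 15-degree turn step is an assumed value.
function applyCommand(car, command) {
  switch (command) {
    case "move":
      return { ...car, moving: true };
    case "stop":
      return { ...car, moving: false };
    case "left":
      return { ...car, heading: car.heading - 15 };
    case "right":
      return { ...car, heading: car.heading + 15 };
    case "beep":
      return { ...car, beeps: car.beeps + 1 };
    default:
      return car; // unknown or null command: leave the car unchanged
  }
}
```

In a p5.js sketch, something like `levelToSize(mic.getLevel(), 20, 220)` could be called inside `draw()`, where `mic` is a started `p5.AudioIn`, and `parseCommand` could be given the transcript from whatever speech recognition tool is in use. Keeping these as plain functions means they can be checked without a microphone or a browser.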

Click here to see it working on my server
